At the beginning, my starter prompt felt like it could work, but I suspected the response would be too broad. I typed in “tell me about World War 1” and got a general overview that covered everything at a surface level without any real depth. It hit the basics like dates and major battles, but it wasn’t the kind of response that would actually help someone understand the war.

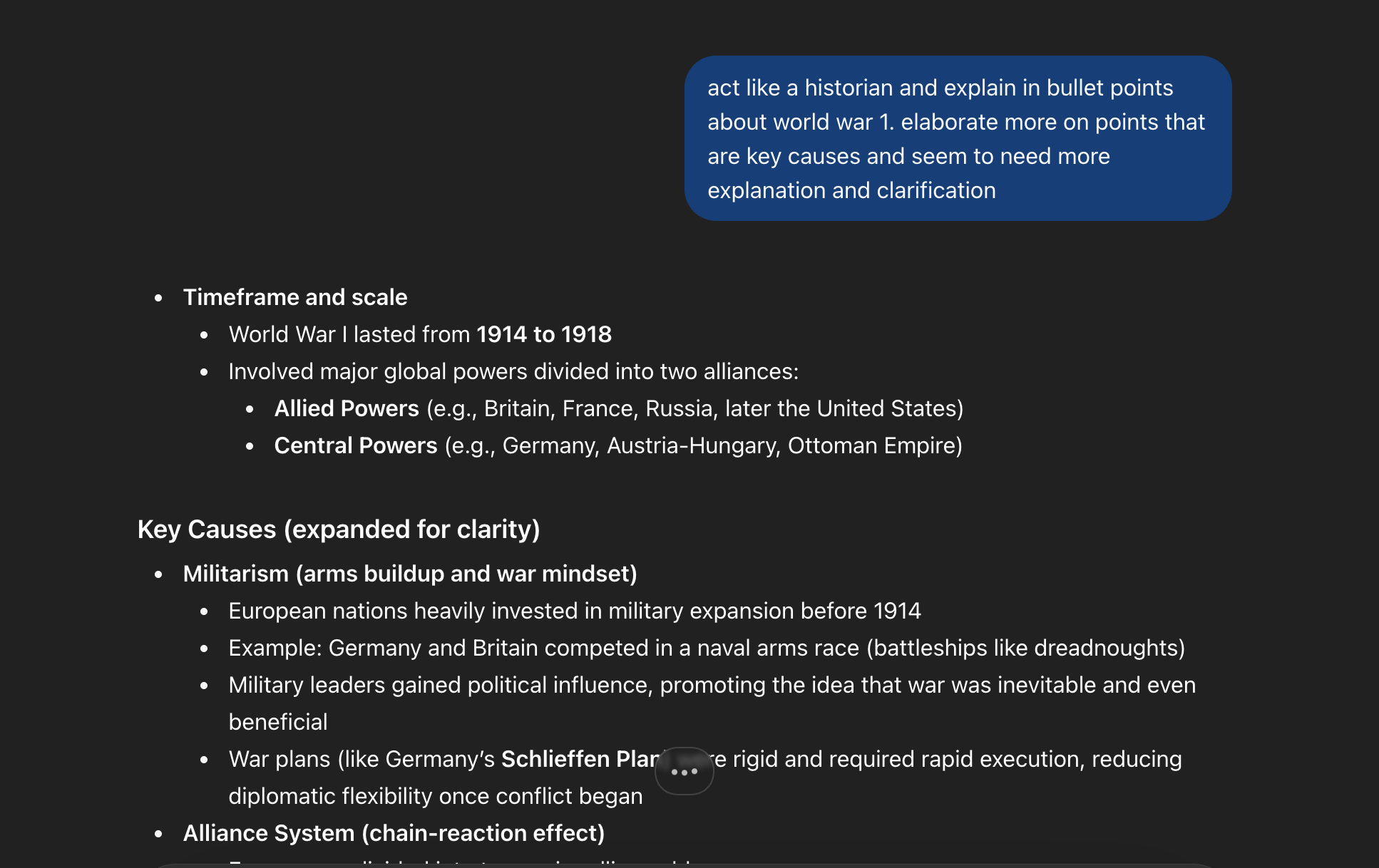

The prompt that helped most was the one where I asked it to act as a historian and to focus on the key causes and events of the war. This changed the answer noticeably. The response was more in depth and named the main causes of the war as militarism, alliances, imperialism, and nationalism. It also explained why each of these contributed to the war instead of just listing them.
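To make the difference between the two prompts concrete, here is a minimal Python sketch of how a broad prompt can be turned into a more directed one by adding a role and a focus. The function name `build_prompt` and its parameters are hypothetical, and the exact wording of the refined prompt is an assumption, since the original wording is not reproduced in this post. It only builds the prompt text; it does not call any AI service.

```python
from typing import List, Optional


def build_prompt(topic: str,
                 role: Optional[str] = None,
                 focus: Optional[List[str]] = None) -> str:
    """Assemble a prompt from a topic plus an optional role and focus areas.

    Hypothetical helper for illustration only; the phrasing is an assumption.
    """
    parts = []
    if role:
        # Giving the model a role sets the perspective of the answer.
        parts.append(f"Act as a {role}.")
    parts.append(f"Tell me about {topic}.")
    if focus:
        # Explicit focus areas narrow a broad topic down.
        parts.append("Focus on " + " and ".join(focus) + ".")
    return " ".join(parts)


# The broad starter prompt: no role, no focus.
broad = build_prompt("World War 1")

# A refined version with a role and explicit directions (assumed wording).
refined = build_prompt(
    "World War 1",
    role="historian",
    focus=["the key causes", "the major events of the war"],
)

print(broad)
print(refined)
```

Printing both strings side by side shows how little the first prompt gives the model to work with compared to the second.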

Overall, I learned that AI is good at giving detailed, useful information, but only when you tell it exactly what you want. The first prompt gave me something generic because I gave it nothing to work with. Once I added structure and explicit directions, the quality improved significantly. As the OpenAI Academy reading states, “prompt engineering is the process of designing and refining your input in a way that helps ChatGPT give the best possible answer” (OpenAI Academy, 2025). To get good results, the user has to be intentional about how they frame their prompts: the more specific the instructions, the more focused the output will be.

OpenAI Academy. (2025). Prompting. https://academy.openai.com/public/clubs/work-users-ynjqu/resources/prompting

I’ve found with all the LLMs I’ve used that if you keep refining your prompt with prompt patterns, you get the best possible output, and the result is more useful for whatever you are trying to get the LLM to produce.