Bad prompting example:

I was using Google Gemini to revise and reformat the text of a formal technical report for my Physics 2 lab.

Original prompt: “remake the abstract, making sure to follow the guidelines listed in the template very strictly.I also need you to add to the conclusion about how our experimental data for 1/r relation did not match the theoretical. be sure to include how the data range of 3 different distances is not very much data. while you’re doing that, go through my PDF and make sure theres no spelling or grammatical errors.”

I also gave it a draft of the abstract and an example of a finished abstract.

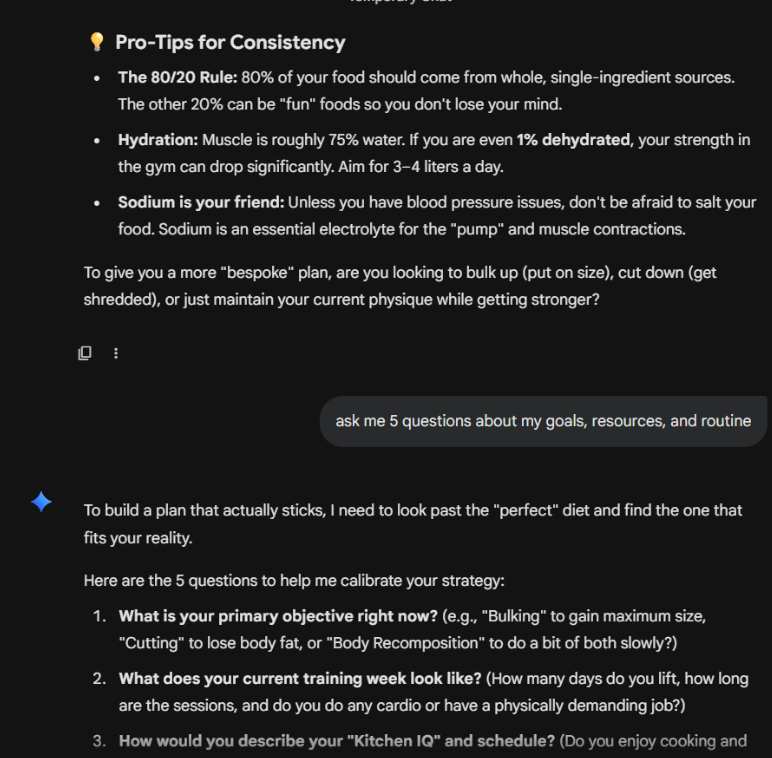

Result: The paragraph the AI returned did not fully answer the prompt or complete the task. It completely ignored my explicit request to add a discussion to the conclusion. It also produced a very long conclusion, even though I had asked it to keep the entire thing short.

Using the UT guide to prompt engineering, I reworked the prompt to get a much better result.

Reworked prompt:

Role: You are a Professor and an expert in crafting Physics Technical Reports.

Purpose: I am finalizing a lab report regarding the 1/r relation. Your goal is to provide a polished, submission-ready version of this document.

Tasks: Please perform these tasks in order to ensure the highest quality:

1. Proofreading: Conduct a comprehensive review of the provided PDF text to correct all spelling and grammatical errors.

2. Abstract Revision: Remake the abstract so that it adheres strictly to these template guidelines: [Paste Template Guidelines Here].

3. Conclusion Enhancement: Update the conclusion to address the discrepancy between our experimental data for the 1/r relation and the theoretical model. You must explicitly argue that the limited data range, consisting of only three distances, is statistically insufficient to confirm the theoretical relationship.

Tone & Format:

- Tone: Maintain an authoritative, formal, and precise scientific voice throughout the revisions.

- Format: The final output must be a single, fully revised version of the text, integrating all the changes above.

Clarification: Before you begin, do you have any clarifying questions regarding the template guidelines or the specific experimental data provided?

Why this prompt is better

The UT guide explains that the AI needs to know who it is, who it is talking to, and why it is doing what it is doing (UT Aspire Prompt Literacy, page 2). The new prompt establishes the AI’s identity as an expert, identifies the professor/academic audience, and clearly states the goal: a submission-ready report.

Breaking the request into three numbered, sequential tasks reduces the “processing resources” the AI has to spend. The AI then does less logic-based work to interpret the request, which leads to greater accuracy (UT Aspire Prompt Literacy, page 2).

Assigning the AI a specific professional persona helps reduce the likelihood of hallucinations and keeps the language appropriate for a formal report (UT Aspire Prompt Literacy, page 2).

The new prompt also tells the AI exactly what to include and what tone to use, which prevents broad or generic responses (UT Aspire Prompt Literacy, page 2).