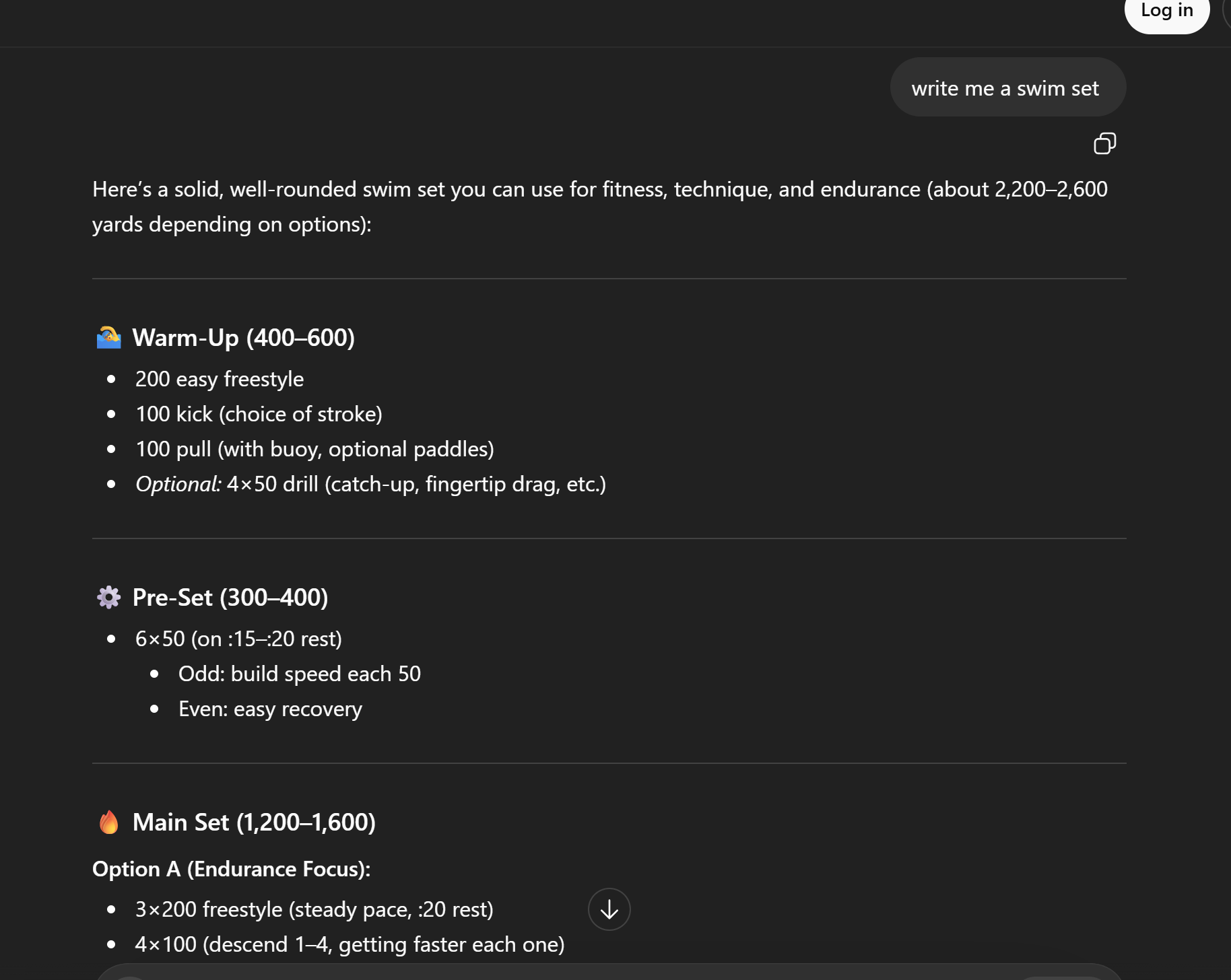

The most useful prompt guideline I found was the persona pattern. When I first prompted ChatGPT with “Write me a swim set,” I kept the prompt very bland, and in return I received a response that was just as bland and simple as my input.

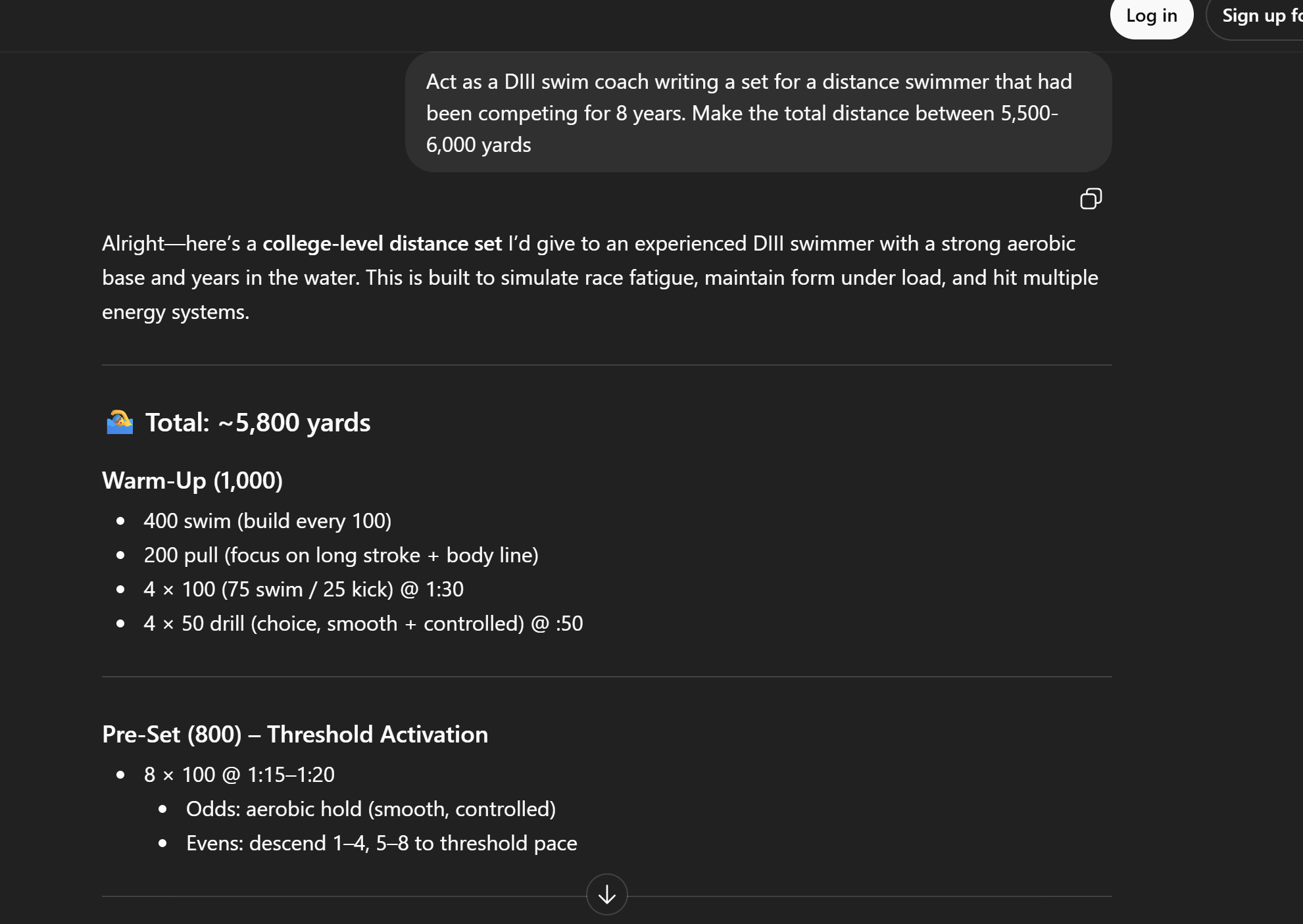

The response was not what I was looking for and did not match my expectations. In the following prompt, I assigned Chat the role of my swim coach and told it about my swimming history.

After I assigned Chat a role, it wrote a much more specific and well-rounded workout that matched a Wooster swimmer’s level. Additionally, in the second prompt I gave it specific goals, which seemed to eliminate the guesswork Chat had been doing.

Based on what I learned in this experiment, I can at least confidently say that when asking Chat to create a regimen of some sort, assigning it a role and setting limits both help. As the prompting guides state, LLMs just predict what you want and offer broad responses, so the more specific you are about your goals, the more likely you are to meet them (UT Guide). The main point of the UT Guide is that keeping your end goal clear is the most important part of prompting. By assigning Chat a role and setting limits, I followed UT’s guide and got an outcome much closer to my expectations. This can be helpful in the future whenever I have a specific product in mind, since I can set limits and expectations while prompting Chat.
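As a rough sketch of what this looks like in practice, the role, background, goals, and limits can be assembled into a single prompt before sending it to Chat. The function name `build_persona_prompt` and every example value below are hypothetical illustrations, not the exact prompt used in this experiment or anything prescribed by the UT Guide.

```python
def build_persona_prompt(role, task, background="", goals=(), limits=()):
    """Combine a persona, a task, and optional context into one prompt string.

    All parameter names here are illustrative assumptions, not part of any
    official prompting API.
    """
    lines = [f"Act as {role}."]
    if background:
        lines.append(f"Background: {background}")
    if goals:
        lines.append("Goals:")
        lines.extend(f"- {goal}" for goal in goals)
    if limits:
        lines.append("Limits:")
        lines.extend(f"- {limit}" for limit in limits)
    # The actual request comes last, after the model has its role and context.
    lines.append(task)
    return "\n".join(lines)


# Hypothetical example values, chosen only to show the shape of the prompt.
prompt = build_persona_prompt(
    role="my swim coach",
    task="Write me a swim set.",
    background="I am a collegiate swimmer.",
    goals=["build endurance"],
    limits=["keep the workout to one hour"],
)
print(prompt)
```

Compared with the bare “Write me a swim set,” the request itself is unchanged; the only difference is the role and context placed in front of it.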

Having Chat act as a coach could surface information that real coaches consider, and at the very least it will make the responses look more professional and polished, which I think is a good thing.

I had a similar experience with the persona pattern.

I had a very similar experience after giving more details. The only difference was that Gemini asked me clarifying questions right away instead of answering my first, less detailed prompt.